Colorado AI law focuses on governance, not gadgets

Sarah Mercer, Jack Hobaugh, Joshua Weiss from Brownstein Hyatt Farber Schreck, LLP.

Companies doing business in Colorado still have time before SB 24-205 takes effect on June 30, 2026, but the real work is already here: inventorying AI tools, identifying high-risk uses and building governance that can withstand scrutiny.

In Brief:

- Colorado AI law takes effect June 30, 2026, targeting high-risk systems

- Businesses must assess AI use in decisions like hiring, lending and housing

- Law requires risk management programs, disclosures and human review

- Companies urged to inventory AI systems and build governance now

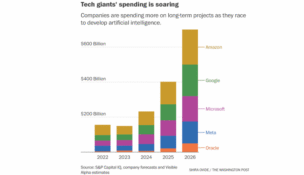

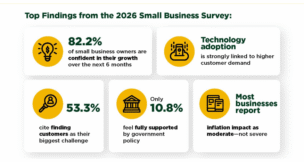

Businesses have spent the last two years implementing artificial intelligence (AI) and agentic AI in large and small ways. Recruiters use it to screen applicants. Lenders use it to sort risk. Landlords and insurers use it to arrive at decisions once reserved for careful human analysis of market trends. With those systems largely in place, what changes now is that AI regulation in Colorado is no longer a distant policy debate. It is an operational and compliance issue.

Senate Bill 24-205, Colorado’s Anti-Discrimination in AI Law, was enacted in 2024 and later delayed, with the effective date set for June 30, 2026. The law is aimed at one core problem: algorithmic discrimination in high-impact decisions. It does not regulate every chatbot, drafting tool or internal productivity aid. It focuses on high-risk AI systems used to make, or to be a substantial factor in making, consequential decisions in areas like employment, housing, lending, insurance, education, legal services and essential government services.

That distinction matters. For many companies, the first compliance question is not whether they use AI. For an ever-growing number of them, that answer is already yes. The real question is whether they use AI in a way that can materially shape high-impact decisions, where bias can enter through how the underlying model was trained and how the system weighs its inputs.

If the answer to the second question is yes, this law deserves immediate attention.

The statute places obligations on two groups: developers and deployers. Developers are entities that create or intentionally and substantially modify high-risk systems. Deployers are businesses that use those systems in the real world. Both must use reasonable care to protect consumers from known or reasonably foreseeable risks of algorithmic discrimination. The law also builds in a rebuttable presumption of reasonable care for companies that satisfy the statute’s specific requirements.

For deployers, that means much more than posting a policy and moving on. Covered businesses are expected to implement a risk management policy and program, complete impact assessments, review their systems annually, provide certain notices to consumers, offer a way to correct incorrect personal data, and provide an appeal process for adverse consequential decisions with human review where technically feasible. They must also make certain public disclosures and report any discovered algorithmic discrimination to the Colorado attorney general.

Developers have their own checklist. They must provide downstream deployers with information about the system, documentation needed for impact assessments, public disclosures about the types of high-risk systems they make available, and notices about known or reasonably foreseeable risks of algorithmic discrimination.

There is also a broader transparency rule that reaches beyond the high-risk category. A company doing business in Colorado that deploys an AI system intended to interact with consumers, such as services provided via a website, must disclose that the consumer is interacting with AI. That requirement sounds simple, but it has practical consequences for customer service tools and consumer-facing digital assistants.

The mistake businesses can make at this point is treating the law as a purely technical problem. It is really a governance problem. Compliance will not be achieved by asking IT whether a model is biased or by asking legal to draft a one-page policy. The businesses best positioned by June 30 will be the ones that can map where AI is used and for what purposes, control shadow AI, identify which tools touch consequential decisions, document ownership, define review procedures, and align procurement, legal, HR, compliance and business leaders around a common process.

That process should start now, even though formal rulemaking is still ahead and further legislative changes remain possible. As of early March, the Colorado attorney general’s office has solicited pre-rulemaking input, but formal notice-and-comment rulemaking has not yet begun. That means some details may still evolve. It does not mean companies should wait. By the time final rules arrive, businesses that have not already inventoried systems and assigned internal accountability may find themselves trying to build a compliance program under deadline pressure.

A practical first step is an AI inventory and an associated AI Users Policy that specifies permitted AI use cases, preferably assigned by role rather than by individual. The inventory should not be a glossy innovation list but a disciplined record of systems that influence consumer outcomes. From there, companies can triage which tools are likely low risk, which are consumer-facing and require disclosure, and which may fall into the statute’s high-risk framework.
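For teams that keep the inventory in structured form, the triage described above can be sketched in a few lines of Python. This is an illustration only, not a legal test: the field names (`owner_role`, `decision_area`, `substantial_factor`, `consumer_facing`), the tier labels and the sample systems are all hypothetical, and the list of decision areas simply mirrors the categories named earlier in this article. Any system flagged here would still need review by counsel.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Tier(Enum):
    # Labels are illustrative, not statutory terms.
    HIGH_RISK_REVIEW = "possible high-risk system; route to legal review"
    DISCLOSURE = "consumer-facing; AI interaction disclosure likely needed"
    LOW_RISK = "likely low risk"


# Decision areas as summarized in this article (assumed list, not the statute's text).
CONSEQUENTIAL_AREAS = {
    "employment", "housing", "lending", "insurance",
    "education", "legal services", "essential government services",
}


@dataclass
class AISystem:
    name: str
    owner_role: str                # accountable role, not a named individual
    decision_area: Optional[str]   # None if the tool touches no consequential decision
    substantial_factor: bool       # does its output materially shape the decision?
    consumer_facing: bool          # does it interact directly with consumers?


def triage(system: AISystem) -> List[Tier]:
    """Return every tier that applies; a system can land in more than one."""
    tiers = []
    if system.decision_area in CONSEQUENTIAL_AREAS and system.substantial_factor:
        tiers.append(Tier.HIGH_RISK_REVIEW)
    if system.consumer_facing:
        tiers.append(Tier.DISCLOSURE)
    return tiers or [Tier.LOW_RISK]


# Hypothetical inventory entries.
inventory = [
    AISystem("resume screener", "HR director", "employment", True, False),
    AISystem("support chatbot", "customer service lead", None, False, True),
    AISystem("internal drafting aid", "IT manager", None, False, False),
]

for system in inventory:
    labels = ", ".join(tier.name for tier in triage(system))
    print(f"{system.name} ({system.owner_role}): {labels}")
```

Recording an accountable role for each entry, rather than a person, keeps the record useful as staff change, which matches the role-based approach suggested for the AI Users Policy.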

Vendor contracts should then be revisited with fresh eyes. If your company is the deployer, can you actually obtain the documentation the law expects developers to provide? If you are the developer, are you prepared to furnish it?

Colorado’s AI law is best understood as an early signal of where mandatory compliance is headed. Similar California Consumer Privacy Act (CCPA) “Automated Decisionmaking Technology” (ADMT) regulations become effective Jan. 1, 2027, with enforcement by a diligent California Privacy Protection Agency (CPPA) that has formed coalitions with other states, including Colorado. Customers, regulators and counterparties increasingly want the same thing: proof that AI-enabled decisions are being governed in a disciplined and explainable way.

Businesses that build that discipline now will be in a better position for compliance and for trust. And in the AI economy, trust may turn out to be the most valuable asset of all.
